Every week, vendors announce new AI tools promising autonomous defence and detection pipelines that operate faster than any human team. For many mid-sized businesses, especially those under pressure to reduce costs and address talent shortages, these promises are tempting. If AI can classify threats, prioritise alerts, and even initiate containment, do we still need human analysts?

Inside a Security Operations Center, the answer is yes. This is what our SOC leaders have to say:

AI is changing the way we detect and respond to threats. Still, it has not changed the fundamental reality of cybersecurity: attacks unfold in ambiguous environments where context determines everything. Models can correlate patterns, but they cannot interpret them. They generate probabilities. And when the risk involves business-critical systems, sensitive data, regulatory obligations, and human livelihoods, probabilities are not enough.

In this article, we offer an inside view into the SOC, using operational data and a use case, to illustrate why human judgment remains indispensable, even as AI becomes a core part of modern defence.

The growing pressure inside the SOC

To understand why analysts matter, we first need to acknowledge the scale of the challenge they face. According to The State of AI in Security Operations (2025), analysts collectively handle an average of 960 alerts every single day. In larger enterprise environments, this number can exceed 3,000, spread across approximately 30 different security tools. Each tool has its own logic, detection rules, and telemetry. Each generates noise as well as insight. And each adds to the operational weight a SOC must manage.

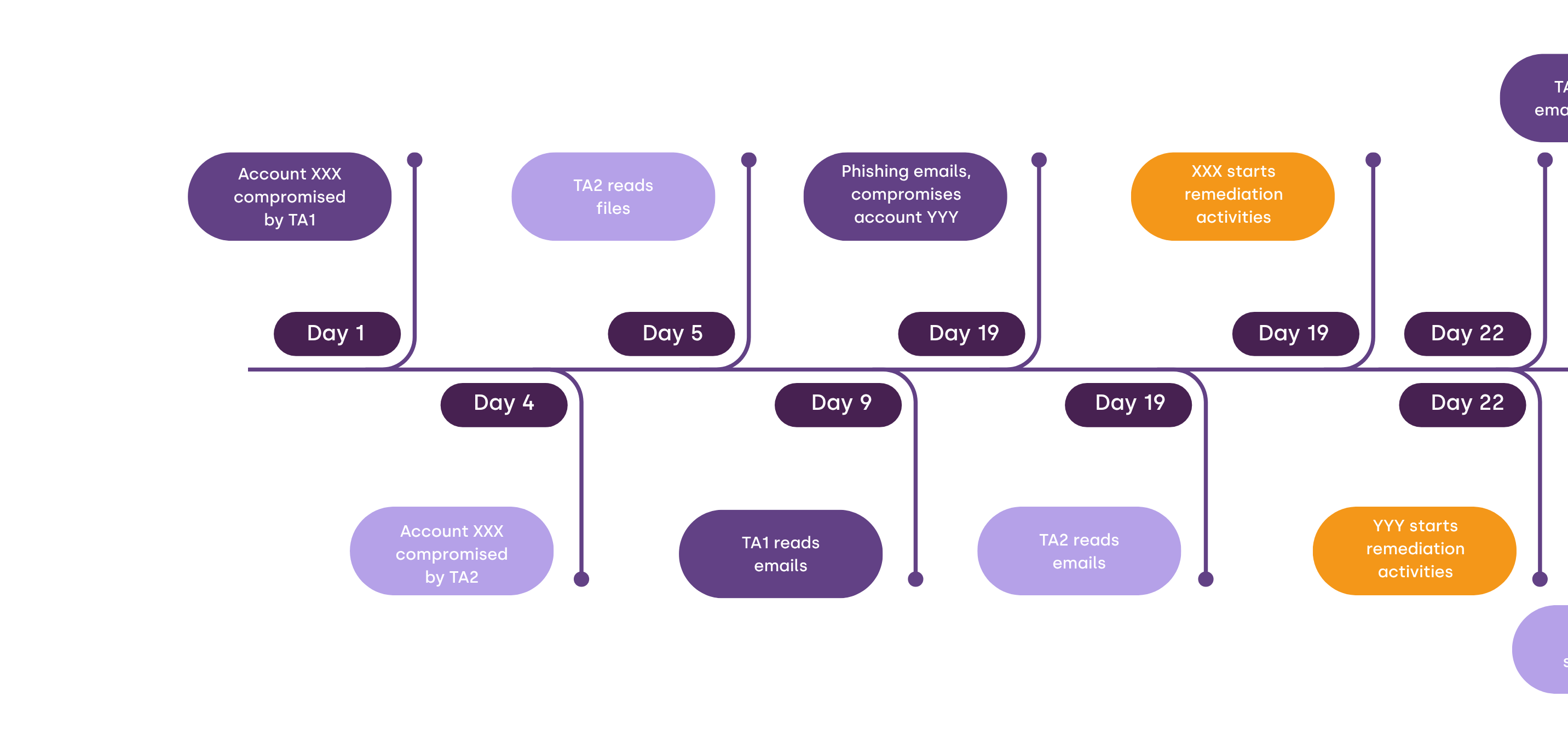

This pressure creates a measurable performance gap. On average, it takes 56 minutes before an alert is even reviewed. A proper investigation can require 70 minutes or more, depending on the environment and the context needed to understand what happened. Meanwhile, attackers, especially those using automated and AI-assisted techniques, move dramatically faster. In business email compromise scenarios, for example, lateral movement can begin within 48 minutes. This leaves only a small window between detection and decision.
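The arithmetic behind this gap can be sketched in a few lines. The snippet below uses only the averages quoted above. The function name `response_gap` is illustrative, and the sketch assumes, for simplicity, that the attacker's clock and the alert's clock start at the same moment, which will not hold in every incident.

```python
# Averages quoted in this section, in minutes. Real values vary by environment.
TIME_TO_REVIEW = 56          # delay before an alert is first reviewed
TIME_TO_INVESTIGATE = 70     # a proper investigation (the text says "70 minutes or more")
LATERAL_MOVEMENT_START = 48  # business email compromise: lateral movement can begin


def response_gap(review: int, investigate: int, attacker: int) -> int:
    """Minutes by which the defender's decision trails the attacker's next move.

    A positive result means the attacker can act before the investigation ends.
    Assumption: both clocks start at the same moment.
    """
    return (review + investigate) - attacker


gap = response_gap(TIME_TO_REVIEW, TIME_TO_INVESTIGATE, LATERAL_MOVEMENT_START)
decision_time = TIME_TO_REVIEW + TIME_TO_INVESTIGATE
print(f"Decision after {decision_time} min, {gap} min behind the attacker")
```

On these averages the decision lands 126 minutes in, 78 minutes after lateral movement can begin, and even the first review at 56 minutes comes too late.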

AI is essential for narrowing that gap. It reduces noise, accelerates correlation, and highlights the anomalies worth examining. But even with powerful AI support, the SOC’s most valuable asset remains discernment. Below is a small use case to illustrate this point.

The PowerShell investigation: a case study in human context

Consider a high-confidence alert involving suspicious PowerShell activity. The system flags a script downloaded from an external IP, stored locally, executed with ExecutionPolicy Bypass, and configured for persistence through a scheduled task. The automated detection engine classifies the pattern as malicious with high confidence (85%), maps it to MITRE ATT&CK techniques (T1059.001, T1105, T1053.005), and even identifies an IOC hit for the external IP.

Image 1. The script in detail

The alert chain appears flawless. Any automated SOC would almost certainly escalate or even initiate containment.

But then come the questions AI cannot answer:

- Is update.ps1 a legitimate internal maintenance script?

- Was the host in an authorised maintenance window?

- Is the user permitted to run administrative scripts?

- Could this activity belong to an automated deployment process?

AI cannot answer these because they require context, that is, an understanding of people, systems, and business operations. This is where the human-in-the-loop is irreplaceable.
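One way to picture this is as a gate in front of any automated response: the alert stays with an analyst until the four questions above have answers. The sketch below is illustrative only. The `Alert` and `ContextAnswers` types, the `triage` function, and the decision rule are assumptions made for this example, not the detection engine's actual logic.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Alert:
    script: str
    confidence: float            # detection engine score between 0.0 and 1.0
    techniques: Tuple[str, ...]  # MITRE ATT&CK technique IDs


@dataclass
class ContextAnswers:
    """Answers only someone who knows the environment can give.

    None means the question has not been answered yet.
    """
    legitimate_maintenance_script: Optional[bool] = None
    in_maintenance_window: Optional[bool] = None
    user_may_run_admin_scripts: Optional[bool] = None
    part_of_automated_deployment: Optional[bool] = None


def triage(alert: Alert, ctx: ContextAnswers) -> str:
    """Hypothetical rule: no verdict until every context question is answered.

    alert.confidence is deliberately not consulted: a high score does not
    replace missing context.
    """
    answers = (
        ctx.legitimate_maintenance_script,
        ctx.in_maintenance_window,
        ctx.user_may_run_admin_scripts,
        ctx.part_of_automated_deployment,
    )
    if any(answer is None for answer in answers):
        return "needs_analyst_review"
    expected_activity = ctx.in_maintenance_window or ctx.part_of_automated_deployment
    if (ctx.legitimate_maintenance_script
            and ctx.user_may_run_admin_scripts
            and expected_activity):
        return "benign"
    return "escalate"


alert = Alert("update.ps1", 0.85, ("T1059.001", "T1105", "T1053.005"))
print(triage(alert, ContextAnswers()))                         # no context yet
print(triage(alert, ContextAnswers(True, True, True, False)))  # analyst has answered
```

With no context supplied, the 85% alert is held for review rather than contained. Once an analyst confirms the script, the window, and the user, the same alert resolves as benign.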

It takes a human analyst familiar with typical enterprise behaviour, common tooling, and regular operational patterns to recognise that the PowerShell script belongs to a legitimate internal tool used by the customer's IT provider. Nothing in the detection logic could have known that. The analyst needed five minutes to validate the context. No AI, signature, or automation could have recognised this nuance.

Without human oversight, this benign administrative process would have been escalated as a high-severity incident. Worse, if automated response had been enabled, legitimate systems could have been isolated or disrupted unnecessarily.

And whereas AI is excellent at revealing patterns, a human analyst must be present to interpret their meaning. This brings us to our second point.

Why autonomous, AI-orchestrated cyber defence remains out of reach

The idea of a self-driving SOC is attractive. In theory, a completely automated system would detect threats instantly, correlate every indicator, and trigger containment without human input. But real-world environments are more complex than that. For now, AI continues to struggle with ambiguity and handles exceptions poorly. It also produces false positives that look dangerously credible. And it has difficulty understanding why something is happening: AI can tell us what happened but cannot reliably tell us why it happened, whether it is normal, or whether acting on it will break the environment.

Let us take a closer look. Large Language Models (LLMs) cannot fully explain their outputs, or at least, the science behind why a particular output was generated remains unclear. Classical machine learning models, by contrast, can provide some explanations. At the very least, their decisions can be traced back to the training data and the patterns they learned.

What holds true for both approaches, however, is that they lack context about the environment in which they are executed. In theory, it would still be possible to build a fully autonomous, self-driving SOC, where agents act solely on the information available to them. In practice, however, this is a highly complex task that requires careful tuning. It means ensuring that the full chain from detection to response is supported by high-quality data and that thorough, continuous model training is in place to minimise false positives and false negatives.

Even with sophisticated measures in place, three major barriers prevent autonomous defence from being reliable:

- Most enterprise environments are complex and ambiguous

- In SMB settings, legacy systems, inconsistent configurations, and custom scripts create behaviours that superficially resemble malicious patterns

- AI hallucinations and model drift may create new risks

And there are deeper issues beneath the surface:

- AI may produce false positives that look dangerously credible. Scripts, automation tools, and legitimate admin behaviour often resemble attack patterns.

- AI produces false negatives with absolute confidence. Sophisticated attackers deliberately design activity to mimic baseline patterns.

- AI does not understand business context. It cannot distinguish between a critical production server and a test environment. And if AI cannot articulate why it classified something as malicious, the organisation cannot demonstrate due diligence.

Where SOC analysts bring in value that AI cannot replicate

Inside the SOC, analysts engage in a form of investigative reasoning that AI cannot emulate. They look for patterns, but more importantly, they look for intent. When they detect unusual behaviour, they consider how the specific organisation operates, who the user is, what normal activity looks like, what is happening elsewhere in the environment, and how attackers adapt their tactics.

This kind of contextual and situational awareness is deeply human. It relies on experience built over hundreds of investigations and countless hours of pattern recognition. Sometimes it is grounded in hard evidence; at other times it is rooted in intuition shaped by the analyst's exposure to real intrusions.

Context, judgment, ownership: the qualities that SOC analysts bring in

Human analysts bring qualities no machine can replicate:

- Context. Analysts understand business processes, user behaviour, maintenance cycles, and operational constraints.

- Judgment. They know which detections require immediate containment and which do not.

- Intuition. A subtle deviation from pattern can trigger a deeper investigation, even when models show low confidence.

- Communication. Analysts translate technical events into clear, actionable guidance for customers.

- Accountability. When business operations are on the line, responsibility must rest with human individuals.

The new attack surface: the AI behind the firewall

As organisations adopt generative AI tools, a second challenge emerges. In this scenario, not only are attackers using AI, but internal systems may unintentionally introduce new vulnerabilities. Employees might upload sensitive files into unmanaged LLM interfaces. Development teams may build model-driven workflows without proper security review. Shadow AI becomes a blind spot, and attackers have already begun exploiting weaknesses in corporate models through prompt injection and data poisoning.

This evolution expands the SOC’s responsibilities. Analysts must now understand not only endpoints, identities, and cloud environments but also how internal AI systems behave, how they consume data, and how they influence operations.

Why mid-sized organisations are especially exposed

Large enterprises have the scale to absorb complexity. They employ specialised teams, enforce strict policy controls, and maintain sophisticated detection pipelines. Mid-sized organisations, however, operate under different pressures. IT teams often juggle infrastructure, support, compliance, and security simultaneously. Legacy Active Directory environments remain common, identity hygiene varies, and visibility gaps persist. And threat actors actively target environments where detection and decision-making lag behind the speed of exploitation.

AI can help close the gap but cannot eliminate the need for a cybersecurity partner who understands the entire threat landscape and can respond in real time with human judgment.

The Eye Security approach: augmented intelligence instead of automated defence

Given the realities inside the SOC, Eye Security has adopted a clear stance. AI should enhance analysts, not replace them. Our SOC leverages machine learning to surface anomalies, UEBA to understand identity behaviour, and automated correlation to reduce noise. But every meaningful decision, from escalation to containment, is made by a trained analyst who understands the customer's environment.

In practice, this means:

- AI to detect, correlate, and prioritise

- Humans to verify, interpret, and decide

- A 24/7 SOC to act decisively when minutes matter

- A unified platform to reduce complexity and noise

- Expert guidance to avoid blind spots

- Integrated insurance to protect financial resilience

This approach aligns with operational reality and regulatory requirements. It ensures that incidents are identified and understood. And every customer receives guidance tailored to their environment, maturity, and risk appetite.
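The division of labour described in this section can be sketched as a queue that the model orders and a person works through. This is a minimal illustration under stated assumptions: `QueuedAlert`, `prioritise`, `work_queue`, and the sample alerts are hypothetical names and data, not Eye Security's platform, and the `analyst_decides` callback stands in for a human decision.

```python
import heapq
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass(order=True)
class QueuedAlert:
    sort_key: float                       # negated score, so the highest score pops first
    alert_id: str = field(compare=False)
    summary: str = field(compare=False)


def prioritise(scored: List[Tuple[str, str, float]]) -> List[QueuedAlert]:
    """AI side: order alerts by model score. No response action is taken here."""
    heap: List[QueuedAlert] = []
    for alert_id, summary, score in scored:
        heapq.heappush(heap, QueuedAlert(-score, alert_id, summary))
    return heap


def work_queue(
    heap: List[QueuedAlert],
    analyst_decides: Callable[[QueuedAlert], str],
) -> List[Tuple[str, str]]:
    """Human side: every verdict comes from the analyst callback."""
    decisions = []
    while heap:
        alert = heapq.heappop(heap)
        decisions.append((alert.alert_id, analyst_decides(alert)))
    return decisions


queue = prioritise([
    ("A-1", "Unusual sign-in location", 0.40),
    ("A-2", "PowerShell with scheduled-task persistence", 0.85),
])

# Stand-in for a human verdict: the analyst recognises A-2 as an IT provider script.
decisions = work_queue(
    queue,
    lambda alert: "benign" if alert.alert_id == "A-2" else "investigate",
)
print(decisions)
```

The model decides only the order in which alerts are seen. The highest-scoring alert is reviewed first, but its outcome is still whatever the analyst concludes.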

The future of cybersecurity: AI-powered, human-led

As AI evolves, its role within the SOC will continue to expand. Models will become better at interpreting behaviour. Automation will reduce manual workload even further. Threat intelligence pipelines will become richer and more dynamic. But human leadership will remain the defining force behind effective cyber defence.

Cybersecurity is, and will remain, a human discipline. Its domains are people, processes, trust, and judgment. And whereas technology can highlight anomalies, it is analysts who ultimately understand impact. Technology informs decisions; analysts take responsibility for them.

The organisations that thrive in the age of AI will be those that embrace this form of partnership. They adopt AI for what it does best, processing vast streams of telemetry with extraordinary speed, and rely on human expertise for what matters most: interpreting the threat, guiding the response, and protecting continuity.

Cybersecurity is, ultimately, a human discipline wrapped in technology. Trust, accountability, and communication remain at its core.