Employees leak sensitive data into personal ChatGPT, Claude and Gemini accounts every day, and most organisations have no visibility into it. This is why we built AI Leak Block, a lightweight browser extension that detects when users interact with AI tools through a personal account. When a risky action is detected, it warns the user before data leaves the browser. To preserve privacy, the extension does not log or collect any data.

- For a technical breakdown, read the full write-up.

- Test the AI Leak Block extension via the Chrome Web Store.

Executive summary

Shadow AI is now a risk every organisation faces every day. Employees routinely use personal AI tools like ChatGPT, Claude, and Gemini without oversight. Data leakage is happening silently: personal data (PII) covered by GDPR and other sensitive inputs (source code, contracts, customer data) may be retained or used to train external models. Awareness training alone cannot keep up with real-world behaviour.

- Eye Security’s approach is different: stop the problem at the point of risk by intercepting unsafe AI interactions in real time.

- The AI Leak Block extension: detects personal vs. corporate AI usage and nudges users toward approved tools without logging any data.

- The result: immediate risk reduction, minimal user friction, and a scalable control aligned with how employees actually work.

Shadow AI is already happening

Shadow AI does not announce itself as a problem. It blends into daily work. An employee pastes a contract into ChatGPT to summarise it. A developer drops a piece of code into a personal Copilot account to debug it. A sales team refines messaging using customer data in Gemini. None of these actions feel risky in the moment. But each of them can result in sensitive information leaving the organisation.

This is what makes shadow AI fundamentally different from traditional threats. It is not driven by threat actors. It is driven by employees doing their jobs using personal or free, ungoverned AI accounts. The tools are legitimate, the connections are encrypted, and the intent is positive. And yet, the outcome can still be data exposure.

Recent data highlights the scale of the issue. AI usage within organisations has surged dramatically, with nearly half of users relying on personal accounts in corporate environments. At the same time, a growing number of breaches are being linked to uncontrolled AI usage, often with higher-than-average costs.

Despite this, most organisations still treat shadow AI as a policy issue rather than an active risk surface.

Shadow AI as a new data exfiltration channel

Traditionally, data exfiltration is associated with threat actors extracting information from systems. In the case of shadow AI, employees are voluntarily sending data out of the organisation as part of their workflow.

This makes it:

- continuous rather than episodic

- distributed rather than centralised

- and difficult to detect using conventional methods

Each interaction with an AI tool represents a potential point of exposure. Over time, this creates a steady stream of data leaving the organisation, often without any record or oversight.

- Nearly half of all LLM usage in corporate environments happens via personal accounts

- 97% of those organisations had no effective access controls in place

- Netskope observed a sixfold increase in LLM usage within companies in a single year

- IBM (2025) reports that 1 in 5 organisations has experienced a breach linked to Shadow AI

A practical solution: preventing AI data leakage in the browser

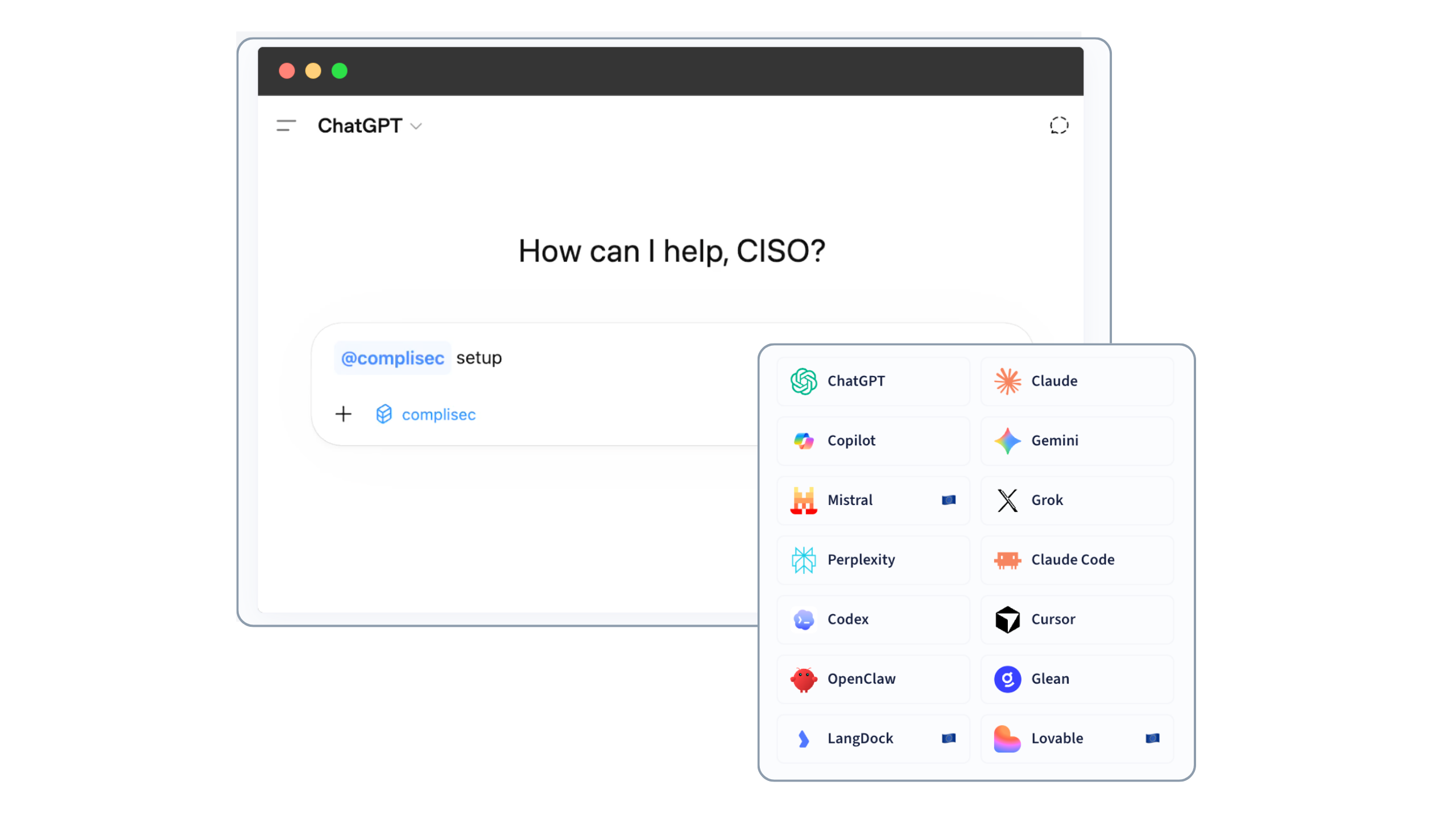

To address this challenge, we developed AI Leak Block, a free browser extension designed to detect and prevent risky AI interactions in real time. The focus is on detecting the use of free or personal accounts, which ChatGPT, Copilot and many other AI tools offer to onboard users quickly. By default, vendors may use the data supplied through free accounts to train their new AI models, which is the real risk.

AI Leak Block operates directly in the browser, where those actions occur. It monitors interactions with major AI platforms, identifies when a user on a free or personal account is about to send a prompt, and guides that user to the company environment of the same platform.

Image 1. AI Leak Block in action

By combining these signals, the extension can distinguish between approved and unapproved contexts.
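The article does not enumerate the signals involved, so the following is only a minimal sketch of how such a classification could work. The signal names (`hostname`, `accountEmail`, `planLabel`), the `OrgConfig` shape, and the rule that both signals must agree are all assumptions made for illustration, not the extension's actual logic.

```typescript
// All names here are hypothetical; the article does not list the real signals.
type AccountContext = "corporate" | "personal" | "not-ai";

interface PageSignals {
  hostname: string;       // site the user is typing into
  accountEmail?: string;  // signed-in account, if it can be read locally
  planLabel?: string;     // plan name shown by the platform, e.g. "Free"
}

interface OrgConfig {
  aiHosts: string[];           // AI platforms to watch
  corporateDomains: string[];  // email domains the organisation owns
  corporatePlans: string[];    // plan names covered by a company contract (lowercase)
}

function classifyContext(signals: PageSignals, org: OrgConfig): AccountContext {
  const host = signals.hostname.toLowerCase();
  const isAiHost = org.aiHosts.some((h) => host === h || host.endsWith("." + h));
  if (!isAiHost) return "not-ai";

  const domain = signals.accountEmail?.split("@").pop()?.toLowerCase();
  const domainOk = domain !== undefined && org.corporateDomains.includes(domain);
  const plan = signals.planLabel?.toLowerCase();
  const planOk = plan !== undefined && org.corporatePlans.includes(plan);

  // Both signals must agree; missing or conflicting signals count as personal.
  return domainOk && planOk ? "corporate" : "personal";
}

// Example with made-up values: a free account under a private email address.
const exampleOrg: OrgConfig = {
  aiHosts: ["chatgpt.com"],
  corporateDomains: ["example.com"],
  corporatePlans: ["enterprise"],
};
const exampleResult = classifyContext(
  { hostname: "chatgpt.com", accountEmail: "jo@gmail.com", planLabel: "Free" },
  exampleOrg,
); // "personal"
```

Treating missing or conflicting signals as personal is a deliberately cautious default in this sketch: a false warning costs the user one click, while a missed personal session costs the organisation its data.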

Privacy by design: no logging, no data collection

One of the concerns with browser-based controls is the potential for overreach. Monitoring user interactions can easily cross into areas that raise privacy concerns. For that reason, AI Leak Block is designed to operate entirely locally. It does not log prompts, store user input, or transmit any data externally. All detection and decision-making happen within the browser itself (client-side).

This approach ensures that the browser extension protects organisational data without introducing new risks related to monitoring or compliance. It also makes the system transparent and easier to trust.

Why this matters for leadership

For leadership teams, shadow AI represents a shift in how data risk manifests. It is embedded in everyday workflows, driven by tools that employees actively rely on. This makes it both more pervasive and more difficult to manage. Ignoring the problem is not a viable option. As AI usage continues to grow, so does the potential for unintended data exposure. At the same time, restricting access to AI tools would undermine productivity and limit innovation.

The challenge is to enable safe usage at scale. This requires moving beyond policy and toward practical controls that operate where risk actually occurs.

How to start addressing shadow AI today

The first step is recognising that shadow AI is already present within the organisation. It is not an edge case or an exception. It is part of how work gets done. The next step is to ensure that employees have access to secure, enterprise-grade AI tools. If approved tools are not competitive in terms of usability and performance, they will not be adopted. Finally, introducing controls at the browser level provides a direct way to reduce risk. By intervening at the moment data is about to be shared, organisations can guide behaviour without relying entirely on policy compliance.

This combination of awareness, enablement, and control creates a robust approach to AI adoption.

Conclusion: from invisible risk to practical control

Shadow AI is not a temporary challenge. It is a structural shift in how work is performed and how data moves through organisations. The organisations that manage this effectively will not be the ones that prevent AI usage altogether. They will be the ones that understand where risk occurs and apply control at that point.

Frequently Asked Questions

What is shadow AI?

Shadow AI refers to the use of AI tools outside approved corporate environments, typically through personal accounts. It becomes a risk when sensitive organisational data is shared without appropriate safeguards.

Why is shadow AI a security risk?

Because data entered into personal AI accounts may be processed, stored, or reused outside the organisation’s control, creating potential exposure of sensitive information.

Is using ChatGPT at work always unsafe?

No. The risk depends on the environment. Corporate AI tools are typically configured with stronger data protection measures, while data entered into personal and free accounts may be used to train the vendor's next AI models.

How does AI Leak Block prevent data leakage?

It intercepts requests in the browser before they are sent to AI platforms and determines whether the user is operating in a personal or corporate context. If the context is not corporate, the user gets a warning that raises awareness of the risks involved. The user can bypass the warning or, with the click of a button, be guided to the properly controlled environment instead.
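The warn, bypass, or redirect flow described in this answer can be sketched as a small decision function. This is an illustration only: the type names, the function `decideOutcome`, and the `corporateUrl` parameter are hypothetical and do not reflect the extension's real code.

```typescript
// Hypothetical decision step; names and URL are illustrative, not the extension's API.
type PromptContext = "corporate" | "personal";
type UserChoice = "proceed" | "switch";

type Outcome =
  | { action: "send" }                   // let the prompt go through
  | { action: "warn" }                   // hold the prompt and show the warning
  | { action: "redirect"; url: string }; // open the approved environment instead

function decideOutcome(
  context: PromptContext,
  choice: UserChoice | undefined,
  corporateUrl: string,
): Outcome {
  if (context === "corporate") return { action: "send" };
  if (choice === undefined) return { action: "warn" };
  if (choice === "switch") return { action: "redirect", url: corporateUrl };
  return { action: "send" }; // user chose to bypass the warning
}
```

Note that the function never receives the prompt text itself. The decision depends only on the account context and the user's choice, which is consistent with the no-logging claim.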

Does the solution collect or store user data?

No. All processing happens locally in the browser. No prompts or user inputs are logged, stored, or transmitted.

Will this disrupt employee workflows?

AI Leak Block is designed to minimise disruption. It only intervenes when necessary and allows users to proceed if the action is appropriate.

Can users bypass the control?

Yes. Users can choose to proceed, ensuring flexibility for legitimate use cases while still reducing overall risk.

How quickly can AI Leak Block be deployed?

Deployment is straightforward: the extension is distributed through the standard extension mechanisms of Chrome and Edge and can be managed centrally in most organisations.
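One common way to manage an extension centrally is the `ExtensionSettings` enterprise policy, which both Chrome and Edge support. The sketch below builds such a policy value as JSON. The extension ID is a placeholder, because the real AI Leak Block ID is not given here, and whether force-installation matches the rollout Eye Security recommends is an assumption.

```typescript
// Chrome Web Store update endpoint used by the ExtensionSettings policy.
const CHROME_WEB_STORE_UPDATE_URL = "https://clients2.google.com/service/update2/crx";

// Placeholder only: the real AI Leak Block extension ID is not given in this article.
const PLACEHOLDER_EXTENSION_ID = "a".repeat(32);

interface ExtensionSetting {
  installation_mode: "force_installed" | "normal_installed" | "allowed" | "blocked";
  update_url?: string;
}

function buildExtensionSettings(extensionId: string): Record<string, ExtensionSetting> {
  // Extension IDs are 32 characters in the range a-p.
  if (!/^[a-p]{32}$/.test(extensionId)) {
    throw new Error(`Not a valid extension ID: ${extensionId}`);
  }
  return {
    [extensionId]: {
      installation_mode: "force_installed",
      update_url: CHROME_WEB_STORE_UPDATE_URL,
    },
  };
}

// JSON to paste into the policy value in your management tooling.
const policyJson = JSON.stringify(
  { ExtensionSettings: buildExtensionSettings(PLACEHOLDER_EXTENSION_ID) },
  null,
  2,
);
```

Replace the placeholder with the ID shown on the extension's store listing before applying the policy through your device management tooling.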

Does this replace AI usage policies?

No. It complements them by providing real-time enforcement at the point where risk occurs.