AI agents are already writing code, processing data, and automating internal workflows. In many cases, they are operating without a clear understanding of what they are allowed to do. That is not because the models are incapable. It is because they lack context. They do not know which systems are critical, what data is sensitive, what must be logged, or when a decision should involve a human.

Today, we are introducing complisec, an open-source skill suite designed to give AI agents the context they need to operate within those boundaries. It gives AI agents the same compliance context a trained employee would have and enforces it at decision time.

Executive summary

complisec helps AI agents operate with compliance awareness by default:

- Combines regulatory awareness with operational CISO knowledge drawn from real-world security and compliance work.

- Comes with built-in support for areas such as GDPR, NIS2, incident handling, audit logging, vendor risk, and data residency.

- Is lightweight, portable, and platform-agnostic, working across major AI environments that support skill files or file-based instructions.

- Is free to use, easy to deploy, and designed to be adapted, giving you a strong foundation to tailor to your own risk profile and environment.

Access the full technical write-up here.

Video 1. complisec in action: EU directives lookup

Addressing the missing layer in enterprise AI

Most frontier AI models are built to be broadly useful across many environments. That makes sense, but it also means they are not designed with specific regulatory contexts like GDPR or NIS2 in mind.

For European organisations, this creates a gap. The AI model may generate correct output, but it does not know the constraints it should operate within for your organisation.

Why the compliance layer matters now

AI workflows touch the same areas that are governed by GDPR, NIS2, sector-specific requirements, internal policies, and customer commitments. An agent that processes personal data without context, makes decisions without oversight, or interacts with third parties without logging and escalation can create risk long before anyone notices.

When organisations deploy AI agents, compliance is often deferred. Questions like “Should the agent store this?”, “Should this be escalated?”, or “Can this data leave the EU?” are rarely answered upfront.

They surface later, when a board member asks, when an auditor asks, when a customer asks, or when something goes wrong. By then, the agent may already have been operating for months.

This is where complisec comes in

complisec is an open-source compliance skill suite for AI agents, built to give them the practical context they need to operate responsibly in European environments. It gives AI agents a small amount of structured context about the organisation they operate in, and uses that context to guide behaviour at the moment decisions are made.

This includes things like:

- which systems are considered critical

- where data is allowed to reside

- what the organisation’s risk posture looks like

- which suppliers are in scope

complisec captures this complexity through a lightweight organisation profile. With that in its memory, the agent can behave differently in situations that matter.

For example, when handling personal data, it can recognise that not all data should be stored or shared by default. When taking an action that affects a critical system, it can flag that this may require logging or escalation rather than continuing automatically.
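To make that example concrete, here is a minimal Python sketch of how an organisation profile could drive such behaviour. The `OrgProfile` fields, the `Action` type, and the `evaluate` function are hypothetical names chosen for illustration. They are not complisec's actual schema or API, and the rules are deliberately simplified.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(Enum):
    PROCEED = "proceed"
    LOG = "log"
    ESCALATE = "escalate"


@dataclass
class OrgProfile:
    """Hypothetical organisation profile; not complisec's real schema."""
    critical_systems: set = field(default_factory=set)
    allowed_regions: set = field(default_factory=set)
    in_scope_suppliers: set = field(default_factory=set)


@dataclass
class Action:
    """A planned agent action, described in illustrative terms."""
    target_system: str
    has_personal_data: bool = False
    destination_region: Optional[str] = None


def evaluate(action: Action, profile: OrgProfile) -> Decision:
    # Personal data leaving the allowed regions needs a human decision.
    if (
        action.has_personal_data
        and action.destination_region is not None
        and action.destination_region not in profile.allowed_regions
    ):
        return Decision.ESCALATE
    # Actions on critical systems are escalated rather than run automatically.
    if action.target_system in profile.critical_systems:
        return Decision.ESCALATE
    # Other personal-data handling continues, but should leave an audit trail.
    if action.has_personal_data:
        return Decision.LOG
    return Decision.PROCEED


# Example usage with made-up system names.
profile = OrgProfile(critical_systems={"billing-db"}, allowed_regions={"EU"})
print(evaluate(Action("billing-db"), profile).value)
print(evaluate(Action("wiki", has_personal_data=True, destination_region="EU"), profile).value)
```

The point of the sketch is that the same action can lead to a different outcome depending on the profile, which is why the profile is kept separate from the rules.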

The goal is not to block the AI agent. The goal is to make sure it operates with the same awareness you would expect from someone working in that environment.

What is included: NIS2, GDPR and beyond

Today, complisec includes one root skill and a set of specialised sub-skills covering areas such as NIS2 gap analysis, GDPR-aware data handling, incident management, change management, vendor risk, audit logging, a compliance hub, and an index of relevant EU directives and national laws.

This means an AI agent can be guided to:

- detect sensitive data and avoid unsafe output

- add proper audit logging to software it builds

- assess suppliers in a NIS2 context

- follow incident workflows, including NIS2 notification timelines

- take data residency and jurisdictional boundaries into account

- apply change management around critical assets

- store crucial logs in a correct, central place
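As a rough illustration of the first item in the list above, the following Python sketch redacts email addresses before text is written to output. It is an assumption about what such guidance might lead an agent to produce, not code taken from complisec. The `redact_emails` helper is a hypothetical name, and the single pattern is far too narrow for real sensitive-data detection.

```python
import re

# Deliberately simple pattern; real detection needs broader coverage and review.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> tuple:
    """Replace email addresses with a placeholder and return (text, match count)."""
    return EMAIL.subn("[REDACTED_EMAIL]", text)


cleaned, found = redact_emails("Contact jan@example.eu or piet@example.nl.")
print(cleaned)
print(found)
```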

The suite is lightweight: easy to inspect, easy to adapt, and easy to use across multiple AI platforms.
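The NIS2 notification timelines mentioned above can be sketched in a few lines of Python. Article 23 of the NIS2 Directive requires an early warning within 24 hours and an incident notification within 72 hours of becoming aware of a significant incident, followed by a final report no later than one month after the incident notification. The `nis2_deadlines` function is a hypothetical helper, not part of complisec, and national transpositions or sector rules may differ from this simplified view.

```python
from datetime import datetime, timedelta, timezone

# Deadlines from NIS2 Article 23, counted from the moment the entity becomes
# aware of a significant incident. The final report (one month after the
# incident notification) is intentionally left out of this sketch.
EARLY_WARNING = timedelta(hours=24)
INCIDENT_NOTIFICATION = timedelta(hours=72)


def nis2_deadlines(aware_at: datetime) -> dict:
    """Return the latest submission times for the first two NIS2 reports."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return {
        "early_warning": aware_at + EARLY_WARNING,
        "incident_notification": aware_at + INCIDENT_NOTIFICATION,
    }


# Example with an illustrative timestamp.
aware = datetime(2025, 3, 3, 9, 30, tzinfo=timezone.utc)
for name, due in nis2_deadlines(aware).items():
    print(f"{name}: {due.isoformat()}")
```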

Built from operational experience

complisec is built by Eye Security’s CISO and security teams, based on what we see in real environments. We work with organisations across Europe on incident response, compliance, and security operations. That means we see how frameworks like GDPR and NIS2 play out in practice, especially when something goes wrong.

In those situations, the questions are always the same. What happened, what data was involved, which systems were affected, and who made the decision. If those answers are not available, it becomes very difficult to respond effectively.
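Those four questions map naturally onto the fields of an audit record. The Python sketch below shows one possible shape for such a record, written as one JSON object per line so it can be appended to a central log. The `AuditRecord` fields and helper names are assumptions made for illustration and do not describe a format defined by complisec.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditRecord:
    """Illustrative audit entry; field names are assumptions."""
    what_happened: str
    data_involved: List[str]
    systems_affected: List[str]
    decided_by: str
    timestamp: str


def make_record(what_happened: str, data_involved: List[str],
                systems_affected: List[str], decided_by: str,
                now: Optional[datetime] = None) -> AuditRecord:
    # Always record time in UTC so entries from different systems line up.
    now = now or datetime.now(timezone.utc)
    return AuditRecord(what_happened, data_involved, systems_affected,
                       decided_by, now.isoformat())


def to_json_line(record: AuditRecord) -> str:
    # One JSON object per line is easy to append and to forward to a log store.
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    what_happened="exported customer list",
    data_involved=["names", "email addresses"],
    systems_affected=["crm"],
    decided_by="agent:report-bot, approved by j.devries",
    now=datetime(2025, 3, 3, 9, 30, tzinfo=timezone.utc),
)
print(to_json_line(record))
```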

That experience shaped how complisec works. Not as a theoretical mapping of regulations, but as a way to bring practical constraints closer to where decisions are made.

Why open source and platform-agnostic matters

complisec is designed to travel with the AI agent. It can be used in major AI environments that support skill files, uploaded instructions, or file-based context. That makes it useful across different teams and use cases, from code generation and security operations to advisory workflows and internal productivity tooling.

Open source also matters. Security and compliance teams need to inspect what is being enforced, review how guidance is expressed, update it as regulation evolves, and adapt it to their own environment. A black-box compliance layer would undermine the very governance it claims to support.

complisec gives teams a strong starting point, not a sealed product. The built-in guidance covers the common 80%. The remaining 20% can be tailored to the organisation’s own systems, sector, escalation rules, and control environment.

Getting started with complisec

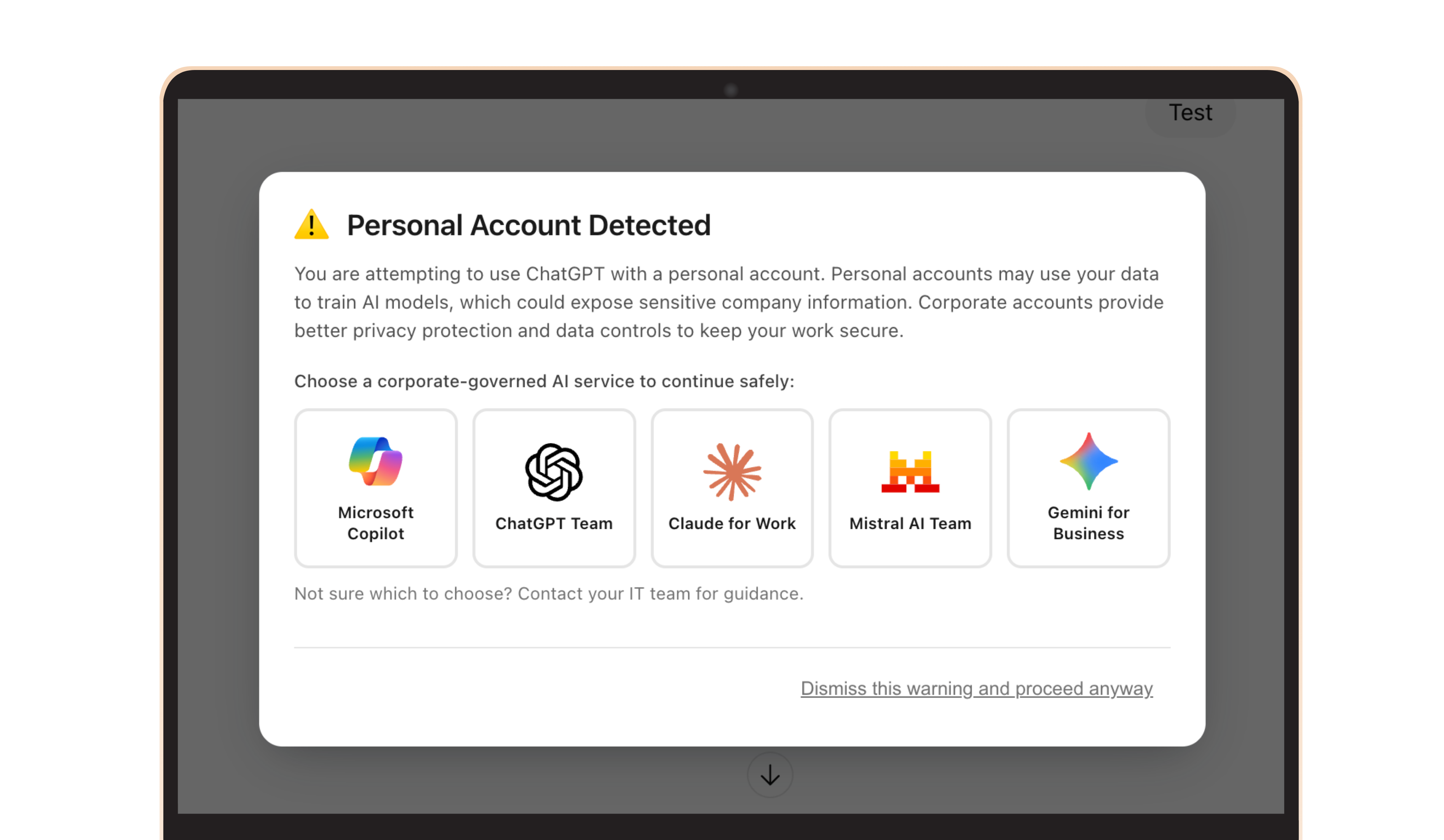

Your IT team can download the skill suite, upload it into your AI environment of choice, and run the setup flow to create your organisation profile. You can also test it out yourself in, for example, ChatGPT.

Video 2. complisec setup

complisec is available as an open-source download. The skill suite can be downloaded as a zip file and uploaded directly into supported AI tools, or its instructions can be referenced in environments that do not support uploads. The project is MIT-licensed, free to use, and intended to be easy to test.

- Landing page: https://skills.eye.security/eu-compliance/

- GitHub: https://github.com/eyesecurity/skills/blob/main/plugins/complisec/README.md

Once installed, a profile will be generated and the agent can start applying compliance awareness during normal work. The aim is not to create a separate compliance process that people forget to follow. The aim is to make compliance part of the workflow itself.

Setting up

- Download or clone the repository

- Upload the skill files into your AI platform (e.g. ChatGPT, Claude, Copilot)

- Configure your organisation profile

- Run the setup command: /complisec setup

Once that profile is in place, the agent can start applying compliance awareness during normal work.

Conclusion: from capability to responsibility

AI agents must become more accountable. That requires context, judgment, and clear limits that define the boundaries within which they should act.

For European organisations, that boundary is shaped by regulation, internal policy, and operational reality. Today, that context lives in documents, teams, and human expertise, not inside the systems doing the work. That disconnect is where risk emerges.

complisec is a step toward closing that gap. By embedding compliance awareness directly into AI agents, compliance is applied at the moment decisions are made, as part of how the system operates.

Frequently Asked Questions (FAQ)

What is complisec in one sentence?

complisec is a skill suite that gives AI agents like ChatGPT and Claude Code the context they need to understand what they are allowed to do within your organisation and within Europe's regulatory environment. It also provides tools that help the agent work compliantly, along with external integrations with official EU directive APIs.

What is a skill?

A skill is a small add-on that teaches AI platforms or systems how to consistently do a specific task or follow a repeatable workflow. A skill is a reusable set of instructions and context; rather than improvising from scratch each time, the AI follows a defined workflow, applies specific rules, and uses supporting guidance to operate more reliably in the situations the skill is designed for.

What makes complisec a skill suite?

complisec is a skill suite because it combines multiple related compliance skills into one coordinated system. Instead of handling just one task, it covers several areas such as GDPR-aware data handling, NIS2 gap analysis, incident response, audit logging, vendor risk, and data residency, giving agents broader compliance awareness.

Is complisec a Claude skill?

complisec works with Claude, but it is not a Claude-only skill. It is an open-source, platform-agnostic skill suite built in a file-based format that can be used across multiple AI environments, including Claude, ChatGPT, Copilot, Cursor, Codex, Mistral, and others that support uploaded skills, instructions, or file-based context.

Why didn’t we build a pure cybersecurity skill?

There are already strong security-focused tools and skills available, and many of those address problems that are largely the same across organisations globally.

We took a different approach. As a European cybersecurity company, we asked ourselves how we could help organisations here adopt AI in a way that fits their regulatory and operational reality, using the expertise we already have in-house. Instead of rebuilding what already exists, we focused on the compliance and context layer. Where relevant, we reference and build on existing security tools and skills within the suite, so they can be used together to support compliant AI workflows.

Who is complisec for?

It is built for European organisations using AI agents in day-to-day operations, especially security teams, IT teams, compliance leaders, and service providers supporting EU clients.

What problem does it solve?

It closes the gap between AI capability and organisational permission by giving agents practical compliance context, escalation logic, and audit guidance.

Which compliance areas does it cover?

It includes support for GDPR-aware data handling, NIS2-related controls and assessments, incident handling, vendor risk, audit logging, data residency, change management, and broader EU regulatory lookups.

Is it legal advice?

No. It is an operational compliance layer designed to support safer agent behaviour. Organisations should still involve legal and compliance stakeholders where needed.

Does it replace human oversight?

No. In many cases, its purpose is the opposite: to help agents recognise when a human needs to be involved.

How difficult is deployment?

It is designed to be lightweight. For most teams, initial setup is a matter of downloading the files, uploading them to the AI platform, and creating the organisation profile.

Who can test and deploy it for my organisation?

Your IT team, MSP, or security partner can install complisec for free within any AI platform your organisation supports, such as Microsoft Copilot, Google Gemini, or ChatGPT Team. In the meantime, you can also install the skill in your own agentic chat using the links provided and test it out locally without risk.

Do I need a dedicated compliance team to use it?

No. In fact, it is especially useful for organisations that need practical guardrails without building a full in-house compliance engineering capability from scratch.

Why is the organisation profile important?

Because compliance depends on context. An agent cannot make good decisions using only generic rules; it needs to understand your assets, suppliers, obligations, and risk posture.

Why open source?

Because trust, reviewability, and adaptability matter. Teams need to inspect the guidance, extend it, and update it as their environment changes.

Does complisec work only with one AI provider?

No. It is designed to be platform-agnostic and usable across major AI environments that support file-based skills or instructions.

What is the long-term vision?

To make compliance an embedded property of AI workflows, so agents operate with clear boundaries from the start instead of relying on manual checks after the fact.